Cases of criminals using AI to commit fraud have skyrocketed by an astonishing 1,210% in 2025, outpacing the staggering 195% spike seen in traditional fraud. At the same time, deepfake fraud attempts in the UK have nearly doubled, rising 94% in the same year - second only to France (96%). More than half (51%) of UK adults now fear falling victim to a financial phishing scam, with one in ten having already lost money to one. Now driven by AI-powered scams, fraud is being "supercharged" to record levels nationwide, with more than 1,200 UK incidents every single day and total losses of over £629 million in just six months.

Experts warn that even more people could fall victim in the summer months, when offences spike as fraudsters exploit a surge in online shopping, concert bookings, deal-hunting and travel bookings.

Adam Nasli, head broker analyst at BrokerChooser, said: "AI scams are becoming more and more sophisticated, with fraudsters now able to easily impersonate banks, government officials, brokers and trading platforms with convincing accuracy. Consumers need to equip themselves with the knowledge to distinguish what is real from what is not, as victims can be pushed into rushed investment decisions under the belief that they are speaking to a genuine market expert or receiving legitimate time-sensitive opportunities.

"Losses to investment scams are typically very high, as speed, trust and emotion are frequently exploited, along with the fear of missing out. Scammers rely on creating pressure to act immediately, whether it's an 'exclusive, insider-only opportunity' or a fake broker urging urgent account verification. Always take a moment to question, test and verify the identity of who you're speaking to. If you cannot independently verify their details online, you are most likely dealing with a scam. In today's environment, protecting your capital starts with slowing down the interaction, not speeding it up."

The 'lag trap test'

While AI voice and video systems can sound convincing in short bursts, they often rely on real-time processing that can have tiny delays, especially when conversations become unpredictable. And these subtle delays often become more noticeable when the natural flow of conversation is disrupted.

To test this, Adam said: "Fire off rapid, back-to-back questions that break the natural flow, such as 'Can you confirm that?' followed immediately by 'Where are you calling from right now?' A real person will adapt instantly, often using natural fillers like 'um' or 'uh', while AI systems are more likely to produce slightly delayed answers or respond as if each question exists independently. These small timing cracks are often the first sign you're not speaking to a human."

Make them prove the room is real

Deepfake videos can simulate a face well, but they can still struggle with real-world spatial awareness and physical interaction. The easiest way to expose this is to ask for small, spontaneous interactions with the environment. Ask for something simple but specific, like "I can't see you properly, can you turn your camera to the left please?" Humans respond instantly and naturally, while AI-driven video often breaks under this pressure, with delayed movement, awkward framing or a background that doesn't shift convincingly with the body.

Eyes and lips don't lie

While faces may look convincing at first glance, inconsistencies often appear when you focus on how the eyes and lips work together. For example, lips that move too smoothly or don't fully match spoken sounds are a red flag. Pay close attention to words containing sounds that require fully closed lips, like 'P', 'B' or 'M', as AI struggles with these and often shows the mouth slightly open or failing to close at the right moment. Another giveaway is blinking. AI-generated blinks can feel mistimed, too consistent or disconnected from the conversation, while real blinking is more irregular and naturally tied to our speech and thought.

Can 'they' get your humour?

AI is increasingly fluent in language, but it still struggles with intent, especially sarcasm, irony and emotionally layered humour. This creates a useful real-world stress test in suspicious interactions. For example, you might say "Oh perfect, I was just waiting for a random bank call to sort out my finances, this feels totally normal." A real human agent will usually acknowledge your concern and clarify legitimacy. AI systems, however, often respond literally or continue the script without recognising the tone - for example, "Thank you for confirming. For security purposes, please verify your account details so we can proceed."

You can also test absurd compliance humour, since scam attempts often rely on victims not questioning what they are asked. For instance, "Before we continue, should I also give you my PIN and my favourite colour while I'm at it?" A human will typically correct the misunderstanding, while AI-driven scam systems may ignore the joke entirely or respond as if it were part of a genuine security confirmation process.

-

Emotional, financial cost of long-distance love drives couples to move in together

-

The Met’s ‘Costume Art’ exhibit brashly transforms flesh, bones and guts into couture

-

Chinese actress Tang Wei announces second pregnancy with South Korean husband at 47

-

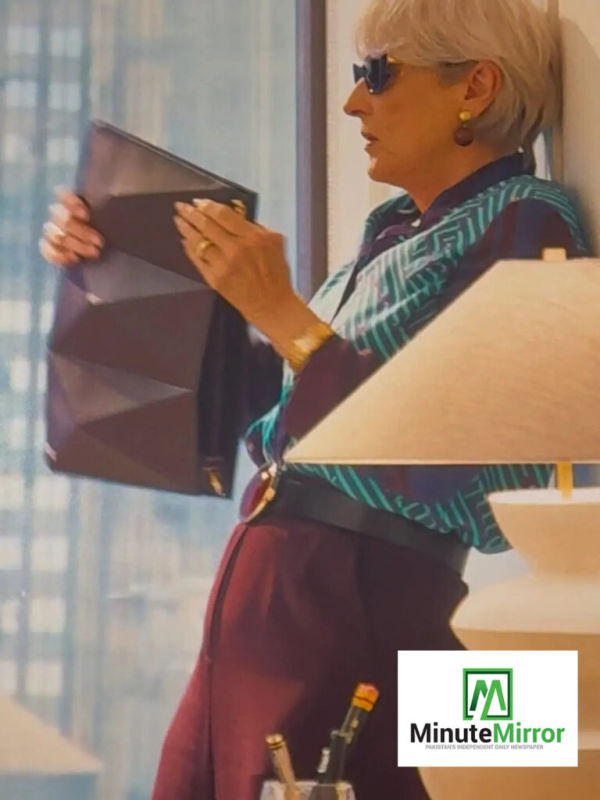

Pakistani brand Warp featured in ‘The Devil Wears Prada 2’ worn by Meryl Streep

-

Do not throw away the watermelon peel considering it useless, instead make delicious pudding from it.