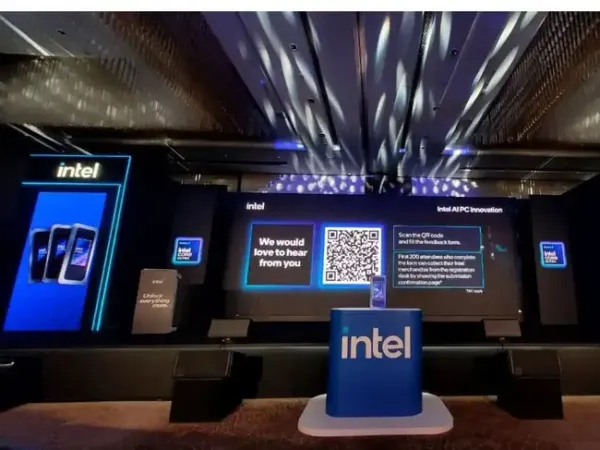

Last week, Intel hosted its AI PC Innovation Day at Conrad Bengaluru, bringing together developers, industry stakeholders, and ecosystem partners to outline the next phase of personal computing. The central theme, “Unleashing On-Device Intelligence,” reflected a broader industry shift: moving AI workloads away from the cloud and onto local hardware.

This transition is not just about performance gains. It signals a deeper change in how AI is expected to function in everyday computing, with increasing emphasis on latency, privacy, and offline capability.

The Shift to On-Device AI

A key takeaway from the event was Intel’s focus on enabling AI directly on PCs through dedicated hardware components such as Neural Processing Units (NPUs). By handling AI tasks locally, these systems aim to deliver faster response times while reducing dependence on constant internet connectivity.

Intel AI PC Innovation Day 2026 at Bengaluru: A key takeaway from the event was Intel’s focus on enabling AI directly on PCs through dedicated hardware components

Equally important is the privacy angle. With data processed directly on the device, sensitive information does not need to be transmitted to external servers. This has clear implications for enterprise, government, and regulated industries where data security remains a priority.

BharatGPT Mini 2: Built for Local Intelligence

The highlight of the event was the showcase of BharatGPT Mini 2, a model designed to run entirely on-device and optimised for Intel’s latest NPU architecture.

The positioning here is clear. Unlike traditional AI models that rely heavily on cloud infrastructure, BharatGPT Mini 2 is built for local execution. This enables core tasks to function without internet access, while also ensuring that user data remains confined to the device.

From a performance standpoint, the model is engineered for low-latency responses, particularly across multiple Indian languages. This is a notable step in making AI more relevant and accessible within the domestic ecosystem, where language diversity remains a key factor.

Hardware and Efficiency Gains

Intel also used the platform to demonstrate next-generation AI PCs, with hands-on sessions showcasing a range of devices equipped with integrated AI acceleration. These included multiple upcoming laptops from leading OEMs, highlighting how AI capabilities are being embedded directly into consumer hardware.

Among the devices on display was the much-anticipated LG Gram lineup, which continues to focus on lightweight design while now integrating AI-driven performance enhancements. The presence of such devices underlined how OEMs are aligning with Intel’s push towards AI-first PCs.

A key focus area across these demonstrations was power efficiency. Dedicated AI silicon allows complex workloads such as content creation and real-time processing to be handled more efficiently, which in turn helps extend battery life. As AI becomes more integrated into everyday workflows, this balance between performance and efficiency will be critical.

Building the Developer Ecosystem

Beyond hardware and models, Intel highlighted its efforts to strengthen the developer ecosystem. Workshops around its OpenVINO toolkit focused on enabling developers and startups to optimise and deploy AI models directly on PCs.

This approach is essential for scaling adoption. By providing tools that simplify the transition to on-device AI, Intel is attempting to lower the barrier for developers looking to build locally optimised applications.
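To make that workflow more concrete, here is a minimal Python sketch of one step in it: compiling a model with OpenVINO on the best available local device. It assumes a model that has already been converted to OpenVINO IR format (`.xml`/`.bin`) and relies only on the documented `ov.Core`, `available_devices`, `read_model` and `compile_model` calls. The NPU-then-GPU-then-CPU preference order and the helper names `choose_device` and `compile_on_best_device` are hypothetical illustrations, not code shown at the event.

```python
from typing import Sequence

# Hypothetical preference order (an assumption for illustration):
# dedicated NPU first, then integrated GPU, then CPU.
PREFERRED_ORDER = ("NPU", "GPU", "CPU")


def choose_device(available: Sequence[str], order: Sequence[str] = PREFERRED_ORDER) -> str:
    """Return the first device in `order` that appears in `available`.

    OpenVINO may report numbered devices such as "GPU.0", so each name is
    compared on the part before the first dot.
    """
    for preferred in order:
        for device in available:
            if device.split(".", 1)[0] == preferred:
                return device
    raise RuntimeError(f"none of {list(order)} found in {list(available)}")


def compile_on_best_device(model_path: str):
    """Compile an OpenVINO IR model on the preferred local device.

    Requires the `openvino` package. It is imported lazily so that
    choose_device() can be used and tested without OpenVINO installed.
    """
    import openvino as ov

    core = ov.Core()
    device = choose_device(core.available_devices)  # e.g. ["CPU", "GPU.0", "NPU"]
    model = core.read_model(model_path)  # hypothetical path to a .xml IR file
    return device, core.compile_model(model, device)
```

For example, `choose_device(["CPU", "GPU.0", "NPU"])` returns `"NPU"`, while a machine without an NPU falls back to `"GPU.0"`. Writing the selection by hand keeps the choice explicit; OpenVINO's built-in AUTO device (for instance `"AUTO:NPU,GPU,CPU"`) can make a similar priority-based choice on its own.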

A Broader Industry Direction

The discussions at Intel AI PC Innovation Day point towards a clear industry trajectory. As AI becomes more embedded in daily workflows, the balance is gradually shifting towards hybrid and on-device models that prioritise speed, privacy, and efficiency.

For markets like India, where connectivity can be inconsistent and data sensitivity is increasingly important, this approach carries added relevance. Solutions such as BharatGPT Mini 2 indicate that localisation, both in terms of language and infrastructure, will play a key role in shaping the next phase of AI adoption.

In that context, the move towards on-device intelligence is not just a technical evolution, but a structural redefinition of personal computing.