Why Goldman Sachs's Hong Kong bankers can't use Anthropic's models

29 Apr 2026

Goldman Sachs has barred its Hong Kong-based bankers from using Anthropic's artificial intelligence (AI) models.

The decision comes as global financial institutions are becoming more cautious about AI tools due to increasing concerns over data security.

Employees of the US bank in Hong Kong were previously able to use Anthropic's Claude via an internal AI platform.

However, access has been revoked in recent weeks, a source familiar with the matter told Reuters.

Decision based on strict interpretation of contract with Anthropic

Contractual concerns

Goldman Sachs made the decision based on a strict interpretation of its contract with Anthropic.

The bank consulted Anthropic and concluded that its employees in Hong Kong should not use any of Anthropic's products.

This move is part of a broader trend among global banks and financial regulators, who are wary of potential risks posed by advanced AI models like Anthropic's latest offering, Mythos.

Other AI tools still accessible to Goldman Sachs employees

Access continuity

Despite the restriction on Anthropic's AI models, other mainstream tools such as Google's Gemini and OpenAI's ChatGPT remain available on Goldman Sachs's internal platform.

The move to restrict access in Hong Kong comes amid rising tensions between the US and China over AI technology, data security, and access to advanced computing tools.

Speculation about other banks limiting access

Market position

Notably, Anthropic's API and Claude.ai are not officially available in Hong Kong, according to the company's disclosure. The move by Goldman Sachs has sparked speculation about whether other banks or companies will follow suit in limiting access to advanced AI models in the region.

Concerns over Chinese companies using American AI models

Competition fears

Goldman Sachs's restriction on Anthropic's AI models in Hong Kong comes amid fears that Chinese companies are using American AI models to train their own systems at a lower cost.

This practice, known as AI distillation, involves training a model on the outputs of a more capable one, and several US tech giants have flagged it.

In February, OpenAI told US lawmakers it had caught DeepSeek trying to secretly copy its most powerful AI models, and warned that the firm was developing new methods to disguise its actions.

-

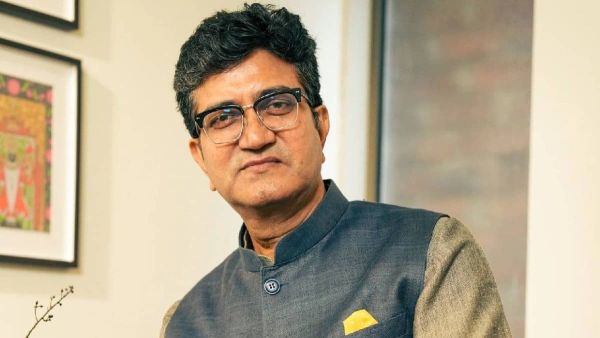

Lyricist Prasoon Joshi Appointed Chairman of Prasar Bharati

-

NASA Radar Just Captured Mexico City Sinking Into the Ground After a Century of Unstoppable Collapse

-

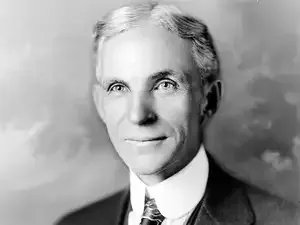

In the early 1900s, Henry Ford Observed Meatpacking Disassembly Lines: That Insight Led to the Automobile Assembly Line

-

Sam Altman invites Elon Musk to OpenAI’s GPT-5.5 private event amid ongoing legal dispute

-

Sam Altman sets out OpenAI's three key focus areas for next phase of growth